Spark Read Table

Parquet is a columnar format that is supported by many other data processing systems. The core syntax for reading data in Apache Spark is `DataFrameReader.format(…).option("key", "value").schema(…).load()`. `DataFrameReader` is the foundation for reading data in Spark, and it is accessed through the `spark.read` attribute. The DataFrame API covers reading from a table, loading data from files, and operations that transform data, and most Apache Spark queries return a DataFrame.

In this article, we are going to learn about reading data from SQL tables in Spark. As Mahesh Mogal points out in "Reading data from SQL tables in Spark", SQL (relational) databases have been around for decades, and we often have to connect Spark to one of them and process that data. In order to connect to a MySQL server from Apache Spark… Spark SQL can also work with Hive tables; however, since Hive has a large number of dependencies, these dependencies are not included in the default Spark distribution. A common problem with external Hive tables is that the Spark catalog is not refreshed when new data is inserted into the table. Another common case is a table `table_name` that is partitioned by `partition_column`.

Many systems store their data in an RDBMS. In R, sparklyr reads from a Spark table into a Spark DataFrame with `spark_read_table(sc, name, options = list(), repartition = 0, memory = TRUE, columns = NULL, …)`. With the Spark Oracle datasource in Java, loading data from an Autonomous Database at the root compartment starts with `Dataset<Row> oracleDF = spark.read().format(oracle…`; note that you don't have to provide the driver class name and JDBC URL. For instructions on creating a cluster, see the Dataproc quickstarts.

Spark SQL Tutorial 2 How to Create Spark Table In Databricks

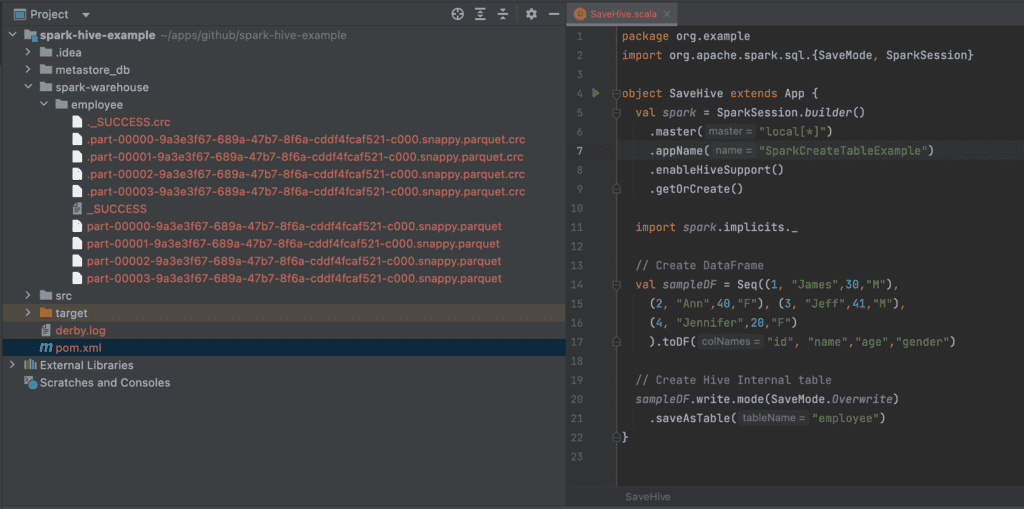

Spark SQL Read Hive Table Spark By {Examples}

For Hive metastore ORC and Parquet tables, Spark uses its built-in readers by default; to use the Hive SerDe instead, set `spark.sql.hive.convertMetastoreOrc` or `spark.sql.hive.convertMetastoreParquet` to `false`. Azure Databricks uses Delta Lake for all tables by default.

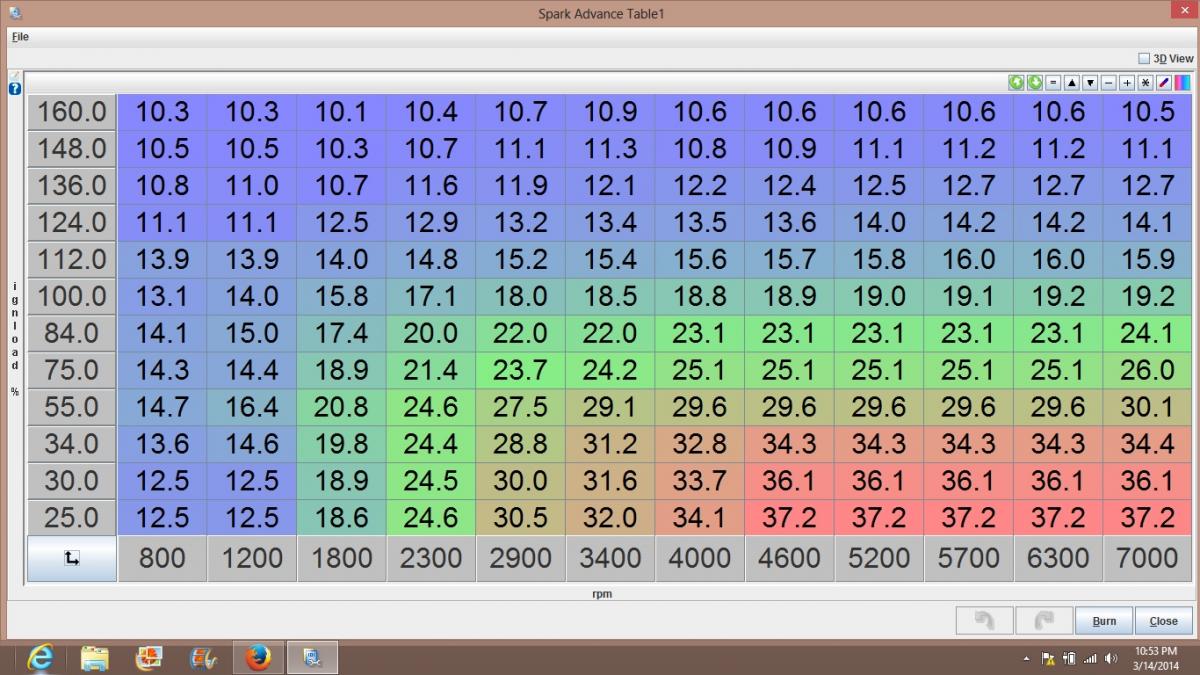

Reading tables and filtering by partition is the subject of a Stack Overflow question whose author is trying to understand how Spark evaluates such a query.

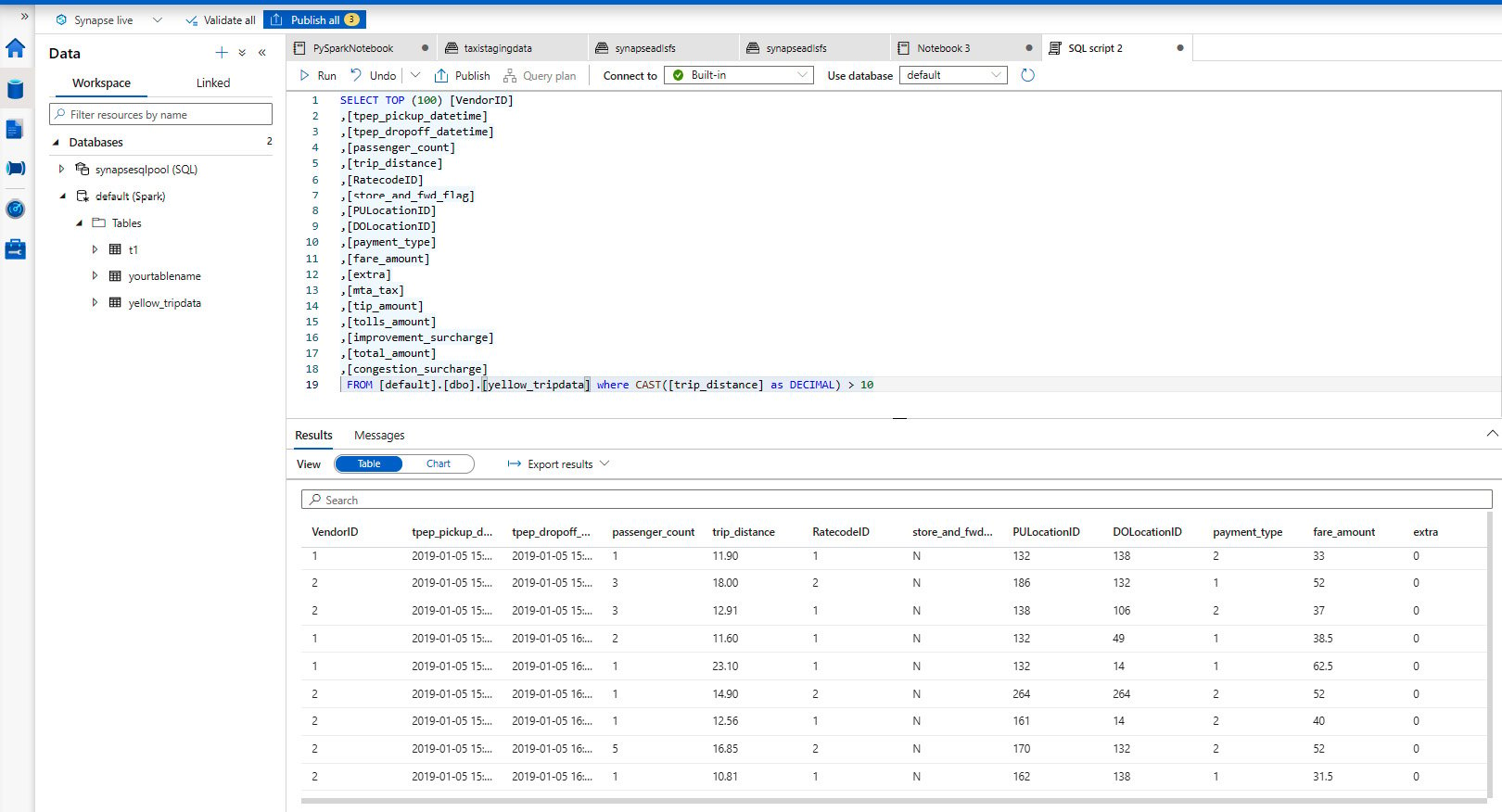

Reading and writing data from ADLS Gen2 using PySpark Azure Synapse

The pandas API on Spark provides `pyspark.pandas.read_table(name: str, index_col: Union[str, List[str], None] = None) → pyspark.pandas.frame.DataFrame`, which reads a Spark table and returns a DataFrame. Its `index_col` parameter (str or list of str, optional, default: None) names the index column(s) of the table in Spark.

Learn how to connect an Apache Spark cluster in Azure HDInsight with Azure SQL Database, and then read data from and write data into Azure SQL Database.

In the simplest form, the default data source (`parquet` unless otherwise configured by `spark.sql.sources.default`) is used for all operations. Spark SQL can also interact with different versions of the Hive metastore.

Spark Essentials — How to Read and Write Data With PySpark Reading

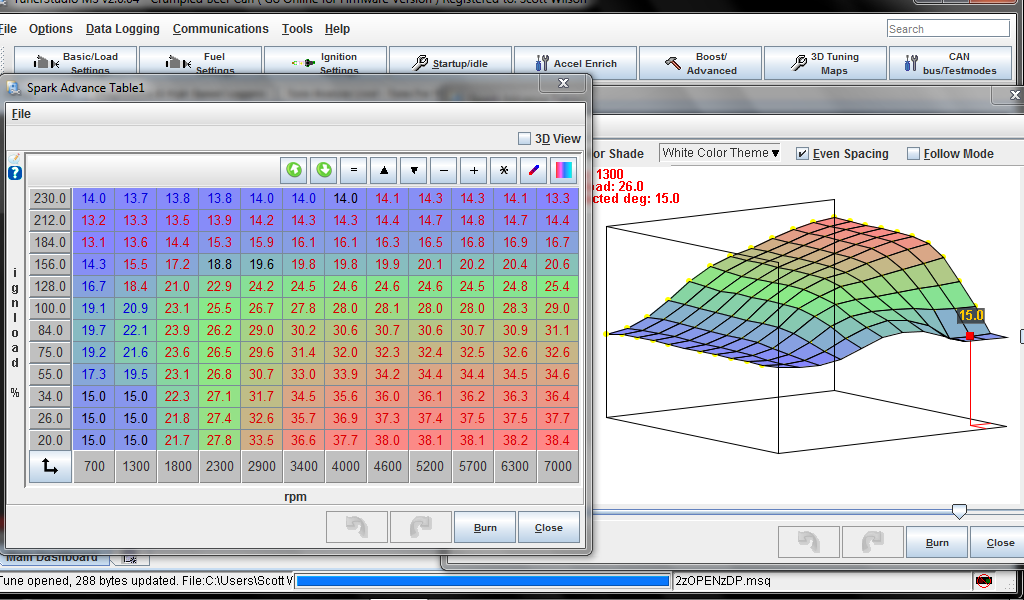

You can read a table into a DataFrame. Spark SQL provides support for both reading and writing Parquet files that automatically preserves the schema of the original data, and it also supports reading and writing data stored in Apache Hive.

Example Code for Spark Oracle Datasource with Java

Spark SQL provides `spark.read().csv(file_name)` to read a file or directory of files in CSV format into a Spark DataFrame, and `dataframe.write().csv(path)` to write to a CSV file.

Reading from a Spark Table into a Spark DataFrame

You can use the `where()` operator instead of `filter()` if you are… The Scala interface for Spark SQL supports automatically converting an RDD containing case classes to a DataFrame; the case class defines the schema of the table.

Spark filter() or where() Function

Spark's `filter()` or `where()` function filters the rows of a DataFrame or Dataset based on one or more conditions or an SQL expression.

Run SQL on Files Directly

You can easily load tables into DataFrames. The `spark.read.table` function is defined in the class `org.apache.spark.sql.DataFrameReader`, and it in turn calls the `spark.table` function.