Dask Read Csv

Dask DataFrames can read and store data in many of the same formats as pandas DataFrames. For CSV, `dd.read_csv` reads CSV files into a `dask.dataframe` and parallelizes the `pandas.read_csv()` function in the following ways:

- It supports loading many files at once using globstrings, for example `df = dd.read_csv('myfiles.*.csv')`.
- In some cases it can break up large files into several blocks that are parsed independently.

The `blocksize` parameter controls how a large file is split. It sets the approximate number of bytes per block. Each block becomes one partition, and block boundaries are moved to the next line ending so that no row is split across two partitions.

Internally, the input is first turned into a list of lists of delayed values of bytes. These are lists of bytestrings in which each inner list corresponds to one file, and concatenating one file's blocks in order gives back that file. Each block is then parsed by `pandas.read_csv` into one partition.
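The lower-level helper `dask.bytes.read_bytes` exposes that list-of-lists structure directly. This sketch assumes a recent Dask in which `read_bytes` returns a `(sample, blocks)` pair when `include_path` is left at its default. The two input files are hypothetical and deliberately identical.

```python
import os
import tempfile

import dask
from dask.bytes import read_bytes

# Hypothetical input: two identical CSV files of about 8 kB each.
data_dir = tempfile.mkdtemp()
for name in ("part0.csv", "part1.csv"):
    with open(os.path.join(data_dir, name), "w") as f:
        f.write("a,b\n")
        f.writelines(f"{j},{2 * j}\n" for j in range(1000))

# read_bytes returns a sample of the first file plus a list of lists of
# Delayed objects, each of which computes to one block of bytes.
sample, blocks = read_bytes(
    os.path.join(data_dir, "part*.csv"),
    delimiter=b"\n",  # move block boundaries to the end of a line
    blocksize=2_000,  # tiny blocks, only to make the split visible
)

print(len(blocks))  # one inner list per file
print([len(per_file) for per_file in blocks])  # number of blocks per file

# Computing one file's blocks and joining them restores that file's bytes.
first_file = b"".join(dask.compute(*blocks[0]))
print(first_file[:12])
```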

Dask Read Parquet Files into DataFrames with read_parquet

A common workflow reads and writes data with the popular CSV and Parquet formats. You load the raw CSV files with `dd.read_csv`, store them with `DataFrame.to_parquet`, and later load them with `dd.read_parquet`. Parquet keeps the column types, so the text does not have to be parsed again.


Keeping original filenames in dask.dataframe.read_csv

By default, the resulting DataFrame does not record which file each row came from. `dd.read_csv` accepts an `include_path_column` argument for this. Setting it to `True` adds a column named `path` that holds each row's source file. Passing a string sets a different column name.

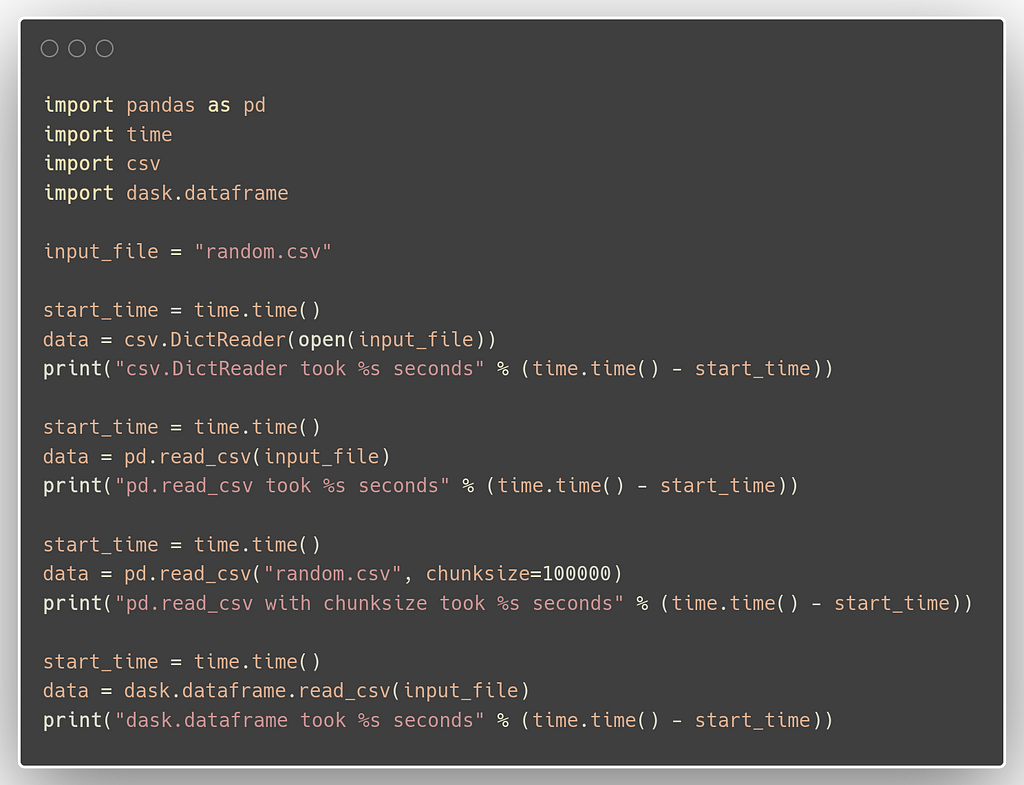

Best (fastest) ways to import CSV files in python for production

Web dask dataframes can read and store data in many of the same formats as pandas dataframes. Web read csv files into a dask.dataframe this parallelizes the pandas.read_csv () function in the following ways: >>> df = dd.read_csv('myfiles.*.csv') in some cases it can break up large files: Web typically this is done by prepending a protocol like s3:// to paths.

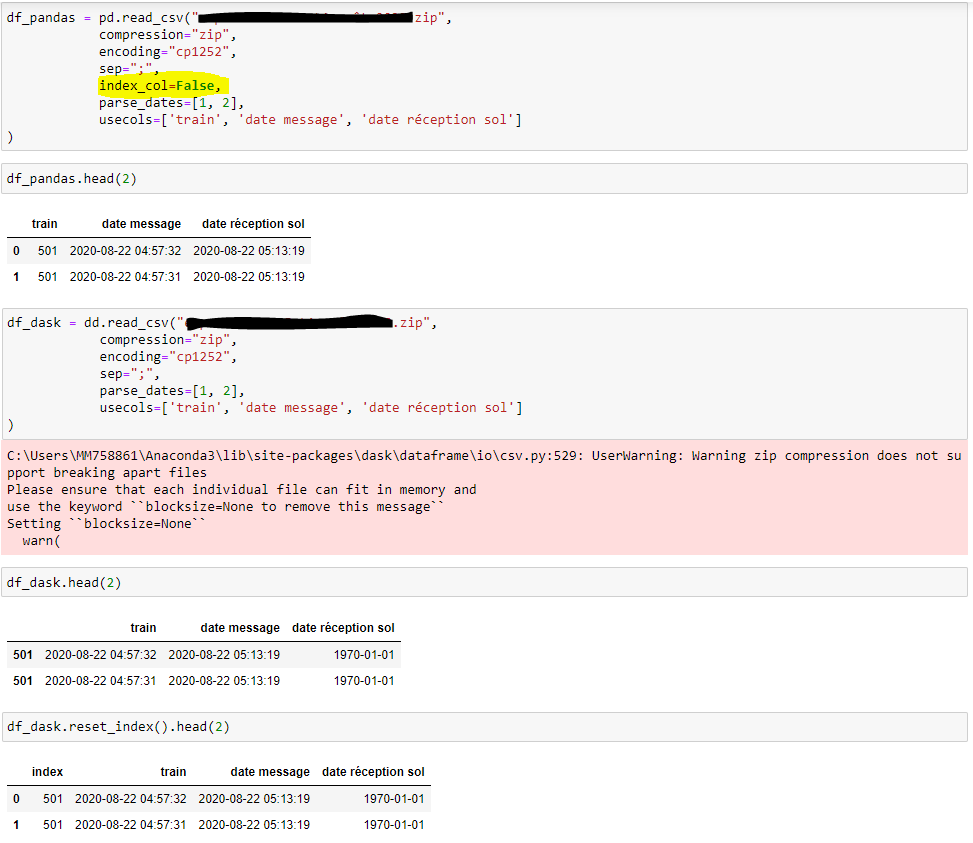

pandas.read_csv(index_col=False) with Dask: the index problem

`dd.read_csv` passes most keyword arguments through to `pandas.read_csv`. However, it does not support the `index` and `index_col` keywords, except for `index_col=False`. Without an explicit index, every partition gets its own default integer index starting at 0, so index values repeat across partitions. To get a meaningful index, read the column as ordinary data and then call `set_index` on the Dask DataFrame.

Reading CSV files into Dask DataFrames with read_csv

The basic call mirrors pandas. `df = dd.read_csv(...)` takes a path or globstring plus, for the most part, the same keyword arguments as `pandas.read_csv`, and returns a lazy DataFrame. Suppose you then need to do something with every row, such as printing it. You could use Dask's chunking for this and maybe get a speedup by doing the printing in the workers that read the data, instead of collecting everything first.

How to read a compressed (gz) CSV file into a Dask DataFrame

Gzip-compressed CSV files can be read with the same function. However, a gzip stream cannot be split at arbitrary byte offsets, so Dask cannot break such a file into blocks. Pass `compression='gzip'` and `blocksize=None` so that each compressed file is read whole as a single partition. Make sure each file fits in memory once decompressed.

Reading remote files with dd.read_csv

The same `dd.read_csv` call also works on data that is not on the local disk. Typically this is done by prepending a protocol like `s3://` to the paths used in common data access functions like `dd.read_csv`. The matching filesystem library, for example `s3fs` for S3, must be installed.
